Most organisations now have AI in the stack, whether they call it AI or not. The operational risk is not "adoption". It is unverified outputs getting treated as facts, then embedded into decisions, reports, and customer interactions. Recent research frames this shift as a move from human-originated misinformation to probabilistic system error: confident outputs without a truth mechanism (Shao, 2025). That is why "responsible AI" has to be defined as a control system: what gets used, what gets verified, who is accountable, and how errors are detected before they cause harm.

The problem with most corporate AI governance is that it over-relies on process artifacts and governance theater, while outcomes languish in accountability purgatory. Put in everyday terms, managers build a cache of paperwork, and use it to deflect responsibility away from their desks and onto "the process".

I'm not saying the process doesnt matter. Risk registers, principles, and committee sign-offs help. But they don't solve the basic mechanics of failure. Two things do:

- Evidence discipline: the system can only speak from sources you can point to, not from statistical memory.

- Data quality discipline: your "source of truth" has to be current, accessible, and internally consistent, or you are automating noise (Guillen-Aguinaga et al., 2025).

NIST’s Generative AI profile makes the same point in different language: risk management has to cover the full lifecycle, with governance tied to measurement and operational controls, not just intent statements (National Institute of Standards and Technology, 2024). MBIE’s Responsible AI guidance for businesses is similarly explicit that governance includes assessing data quality, access permissions, and proper usage controls, not just buying tools (Ministry of Business, Innovation and Employment, 2025). But what does that actually mean? These statements alone read like loose language, not prescribed definitions with consequences for failure. In my experience anytime language is poorly defined, poor outcomes follow as people re-interpret definitions to whatever gives them the most leeway.

If your organization can't show the sources, can't measure error, and can't assign accountability, then you don't have "responsible AI". You have risk in a new wrapper. This isn't a new problem created by AI. This problem is repeated any time there's a new technology being implemented and there's hype created around how easy it's going to make business. What AI is doing is offloading assumptions that were normally fact-checked by staff, and putting them into a black box with corporate friendly language like "hallucination" used to obfuscate what used to get a real person in deep trouble: fabrication of data and failure to check work.

Three examples of Risk

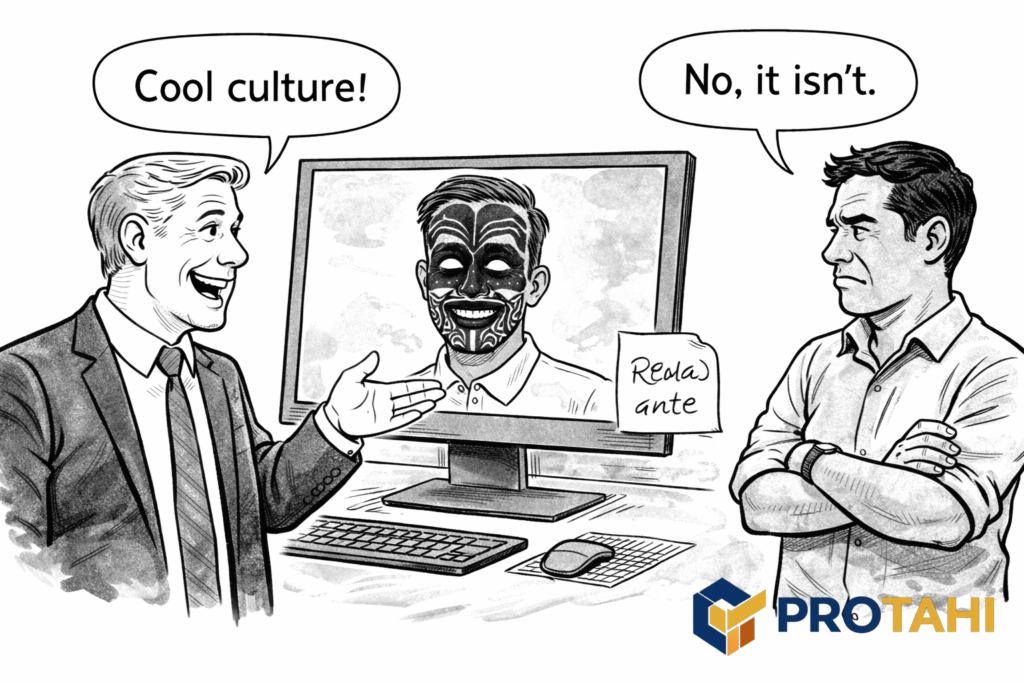

1) Cultural Harm through Appropriation and Misuse

The failure mode is simple: a generator produces culturally significant markings on a face because the model learned shallow correlations between identity, aesthetics, and internet-labeled training data. No malice required. The harm is still real, and it lands as disrespect and stereotyping. This is not theoretical. There is documented concern in Aotearoa New Zealand about casual use of moko and mataora via filters and digital overlays, treated as novelty while carrying identity and IP implications for Māori (Radio New Zealand, 2022). Indigenous data sovereignty work also frames this as a governance problem in digital environments where Indigenous heritage can be extracted, repurposed, and commodified without control (Spano, 2025).

Just telling the AI "Do not do this" is not a control.

A control is a hard constraint in the content pipeline: prohibited elements lists, review gates, and model selection that reduces the chance of identity-based stereotyping. You also need provenance of training data and clarity on what the model was trained to reproduce, and what guardrails are in place preventing unauthorized productions that fall outside of scope. If you cannot answer those, you cannot claim cultural safety.

This is one place where "we will be careful" is operationally meaningless. Yet it is today the the de-facto accepted response. It's like BP saying "We're sorry" after dumping oil into the Gulf of Mexico. Yes, it's that ridiculous.

2) Hallucinated Citations in Paid Deliverables

The Deloitte incident is the cleanest modern case study because it is mainstream, high-stakes, and measurable: official reporting containing fabricated references and erroneous material, leading to a partial refund and public scrutiny (Associated Press, 2025; Fortune, 2025; The Guardian, 2025). Whether the company intended to mislead is irrelevant, and honestly it depends on inferring motivation. The failure is procedural: AI text entered a deliverable without a verification workflow that treats citations as evidence requiring validation.

These fabrications then entered externally facing reports, and became part of the public space. Real businesses used advice based off of falsified data to create strategy. If you're thinking "Well, Deloitte needs to improve its processes. They deserve this" then you're partially right. But you're also missing the point. Deloitte got caught. How many others are not getting caught because they know how to hide sloppy research, or the fabrications simply have not been uncovered yet?

This is exactly what the academic literature warns about: hallucinations and confident falsehoods are an inherent risk pattern when systems generate plausible language without an internal truth constraint (Shao, 2025). In other words, "it reads well" is not evidence.

Any report, policy paper, or advisory deliverable that cites evidence needs a citation integrity pipeline. That means reference verification, source retrieval, and reviewer accountability. If AI was used in drafting, the process must assume the draft contains errors until verified. Treat it like untrusted input, and just like you would with a human, engage in rigorous peer review.

Don't just verify that the source exists and that the link is valid. Verify what the documents actually say. AI depends on metadata, and it will misquote sources while otherwise passing integrity checks.

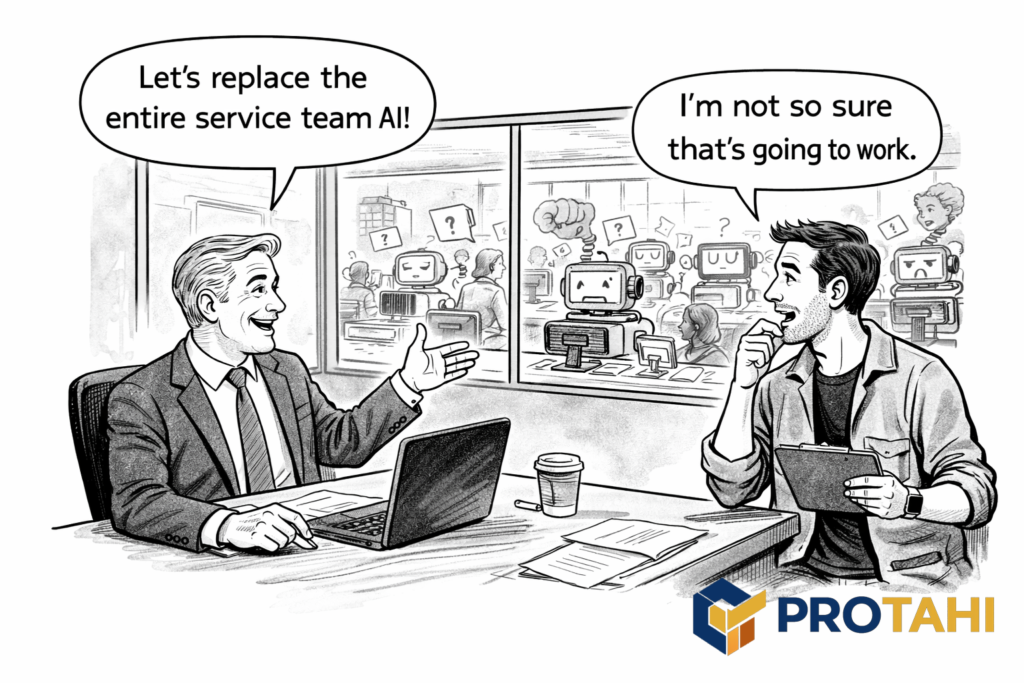

3) Customer Service Agent Automated Knowledgebases

Many organizations are rushing into knowledgebase-sourced AI pilots as if the model will compensate for weak information management. It won’t. If your knowledge base is poorly structured, inconsistently maintained, or retrieval-unfriendly, you don’t get “smarter support”. You get a system that sounds confident while it guesses. This can be a disaster when customer service teams are engaging in high-stakes situations and are poorly trained and AI dependent, or worse: when the front line customer service agents have been replaced by chatbots.

Retrieval-Augmented Generation (RAG) is used specifically to ground an LLM’s answers in organisational sources and reduce hallucinations. Evidence shows RAG can reduce hallucination relative to standard generation models when retrieval and grounding are implemented well. (Ayala & Bechard, 2024). But the control surface just moves. Retrieval-augmented systems can still hallucinate, and retrieval-stage errors remain a major cause. (Zhang et al., 2025). That is why the recent mitigation literature focuses heavily on retrieval quality and retrieval-to-generation alignment, not just prompt hygiene. (Zhang, 2025)

So the trap is not “use RAG”. The trap is “point RAG at content that was never operated as a dependable knowledge system, audited for completeness, and tested on untrained humans for repeatable solution production”. When documents lack clean structure and metadata, retrieval becomes brittle. The system can return irrelevant fragments, and the model then stitches a plausible answer around them. You can even end up with citations that look legitimate but do not actually support the claim. NIST treats this as a real risk pattern by explicitly calling for verification of RAG data provenance and verification of sources and citations in outputs during deployment and ongoing monitoring. (NIST, 2024).

Customer service AI lives or dies on knowledge operations. Ownership, versioning, and content age are things human agents learn to work with inside of their roles. They then follow escalation rules when the systems don't work. Current enterprise RAG research is already moving toward metadata-driven approaches because treating enterprise knowledge as flat chunks is not enough. (Khan et al., 2025). However, current LLMs are often pushed toward producing an answer even when uncertain, because common training and evaluation setups reward “helpful, plausible completions” more than abstention. That’s a core reason hallucinations persist: when the system is unsure, guessing can score better than saying “I don’t know.” (Kalai, Nachum, Vempala, & Zhang, 2025; OpenAI, 2025).

Separately, escalation behavior is not automatic. Unless the product is explicitly designed and trained to abstain, route, or hand off, models tend not to volunteer “I can’t do this, escalate,” and they often underproduce “I don’t know” responses. (Kwon et al., 2025).

Augmentation first, automation second. That's how we think things should be done. Start by making the knowledge base usable for humans, then deploy RAG over the same controlled sources with evidence logging and routine verification. Use it to speed up human response, not replace slow human workers with error prone AI workers that act as a gatekeeper for smaller human teams.

If you cannot prove where an answer came from, and prove that the source supports it, you do not have responsible AI in service. You have a liability that talks well.

Protahi’s responsible AI approach

This is the practical delivery loop we run. No theatrics. No loose language like "principles" without proper human-managed controls and human accountable reporting chains.

- Scope the Consequences

Identify where errors cause harm: safety, legal exposure, cultural harm, financial loss, client impact. Map the blast radius. - Lock down Sources of Truth

Establish what the system is allowed to use as evidence. If sources are not current, consistent, and owned, fix that before adding automation (Guillen-Aguinaga et al., 2025). - Choose an Auditable Architecture

Prefer grounded approaches where outputs can cite and link to controlled sources. Make as many fields machine readable and indexed as possible. RAG is often appropriate, but only when retrieval quality is measured and monitored (Ayala et al., 2024; Mala et al., 2025). - Build Verification into the Workflow

For reports and executive outputs: citation checking is mandatory. For customer service: sampled audits, escalation triggers, and clear human override. NIST’s GenAI profile supports lifecycle risk management tied to measurement and monitoring, not just policy statements (NIST, 2024). - Put Cultural Constraints in the Pipeline

If certain motifs or representations are prohibited or sensitive, enforce that in generation constraints and review gates. Do not rely on "good intentions". Māori concerns around digital misuse of moko and mataora show why (RNZ, 2022). - Run a Pilot with Real Measurement

Track accuracy, deflection quality, complaint types, rework, and escalation rates. Adjust the KB and rules before scaling.