Organizations are adopting AI in hiring to solve scale, speed, and consistency problems. The promise is straightforward: reduce bias, process more candidates, and make better decisions using data (Rigotti et al., 2024; Fernandez-Mateo, 2025).

In practice, the opposite is happening.

AI is increasingly being placed at the front of the hiring process, where information is least complete and judgment matters most. Systems are asked to screen, rank, or exclude candidates before any human has meaningfully engaged with them. Humans are then brought in later to review outputs, explain outcomes, or justify decisions already made (Fernandez-Mateo, 2025).

That placement error is the core problem.

Hiring isn't primarily a data classification task. It's a judgment task under uncertainty. Candidates rarely present as complete or comparable data points. CVs are uneven, experience is contextual, and capability often shows up indirectly. Empirical hiring research consistently shows that human evaluators rely on contextual interpretation, trade-offs, and forward-looking judgment that are not fully captured in formal criteria (Rigotti et al., 2024; Fabris et al., 2025). Much of that knowledge is tacit rather than explicit. Asking for it to be cleanly encoded is like asking to ride a unicorn.

AI systems can't do this honestly. They operate on what is written, structured, and historically legible. When information is missing or ambiguous, modern language models don't pause or rely on intuition. They infer and generate plausible structure. This behavior isn't a defect. It's a consequence of training and evaluation regimes that reward fluent completion over abstention (Kalai et al., 2025). While some may argue that this is no different from human inference, it is fundamentally different because human processes have an ethical compass that acts as a bridge, allowing us to compromise between reality and desire. LLMs do the opposite. They use their datasets to attempt to reshape reality into what is desired.

When you put that behavior at the gate of hiring, you don't remove bias or subjectivity. You formalize it early, at scale, and out of sight (Fabris et al., 2025). This creates an aggressive exclusion filter that has manifested itself in a hiring crisis that has been building since 2018, when applicant tracking systems (ATS) first started implementing AI-like complex filtration and scoring mechanisms.

The Results Speak For Themselves

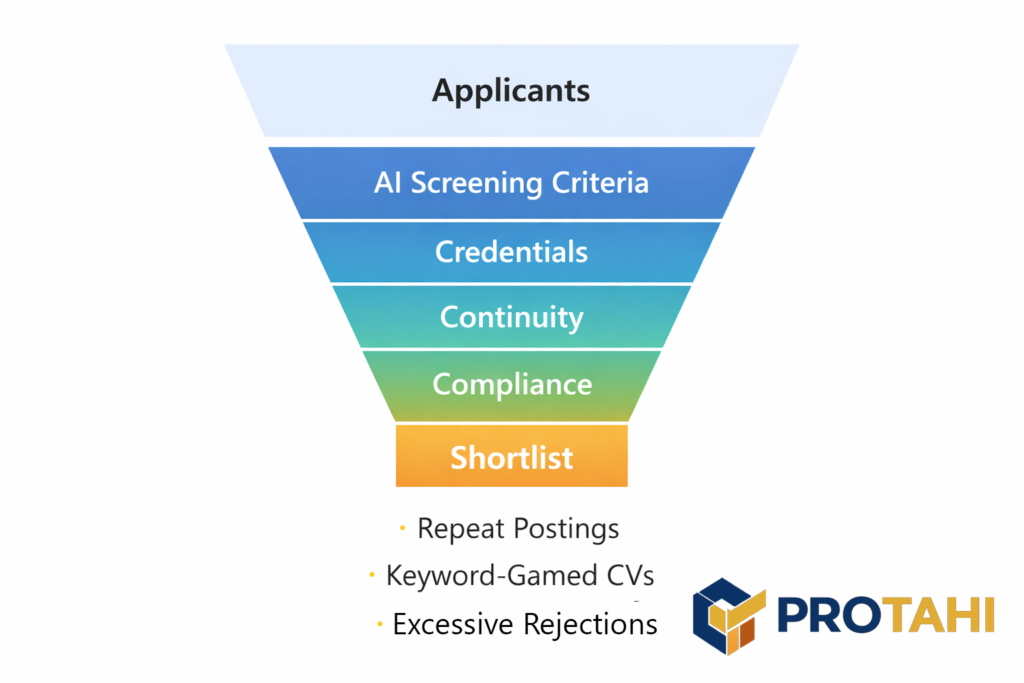

The practical outcome is a hiring funnel optimized for paper fit. This has led to repeat job postings that go unfilled, AI-based ATS hacks that fabricate candidate experience to hit every keyword, and a referral culture that compensates for the shortcomings of the data-driven systems companies introduced to automate hiring.

This happens because AI screening systems tend to reinforce narrow definitions of suitability, privileging:

- Credentials over capability

- Continuity over adaptability

- Compliance over growth

This reproduces and intensifies the “unicorn” search dynamic described in organizational hiring research, where automated systems continue filtering rather than accommodating trade-offs that human managers routinely make (Fernandez-Mateo, 2025).

Humans recognize these trade-offs because they can adapt roles, redistribute work, and invest in development. AI systems cannot do this, because they have no authority to negotiate requirements or own the risk of compromise. As a result, capable candidates are excluded not because they can't do the work, but because they don't perfectly resemble historical patterns encoded in the data (Fabris et al., 2025).

This isn't new. Applicant Tracking Systems were already amplifying credentialism and keyword matching in the previous decade. What generative AI adds is scale, fluency, and confidence. Decisions that were once visibly crude are now linguistically persuasive and harder to challenge (Rigotti et al., 2024).

Meanwhile, accountability becomes diffuse. Hiring managers are asked to trust outputs they did not shape. Analysts are asked to defend decisions they did not make. Vendors promise neutrality while disclaiming responsibility. Regulatory analysis is explicit that responsibility for discriminatory outcomes does not transfer to tools or vendors (Mobley v Workday, 2024; DeStefano, 2025).

That is not efficiency. It is risk displacement.

An Inverted System

Right now the system is inverted because AI is being asked to do the parts of hiring that are least “data-like” and most human: interpreting incomplete signals (Fernandez-Mateo, 2025), weighing trade-offs, and deciding what to explore further. That inversion doesn’t remove bias. It reflects whatever the organization already rewards and encodes it into a repeatable filter (Rigotti & Fosch-Villaronga, 2024).

If past “success” has been defined through proxies like linear tenure, credential pedigree, uninterrupted employment, particular writing styles, or culturally legible career narratives, an AI screening layer tends to treat those as signal, not as context. The result can look like objectivity while quietly hardening preference into policy, then scaling it across every applicant at speed (Rigotti & Fosch-Villaronga, 2024; Fabris et al., 2025).

Generative systems add a second amplification: they don’t just score, they narrate. They can produce fluent, persuasive rationales for exclusion even when the underlying evidence is thin or ambiguous, which makes the decision feel “explained” rather than questioned. That dynamic aligns with current LLM research showing that hallucination and confident error are not edge cases. They are structurally related to how these models are trained and evaluated for plausible completion rather than truth-constrained abstention (Kalai et al., 2025).

Put together, the inversion doesn’t just automate screening. It can make exclusion more systematic and harder to see because it arrives wrapped in professional language and process legitimacy (Fabris et al., 2025; Fernandez-Mateo, 2025).

What Should It Be?

Centrally, AI should not be making people decisions. It should be supporting them. Our current structure inverts that. We have AI assessing people's personalities, judging how skills match up, and inferring whether skills cross over. The result is a narrow hiring funnel where experience is rewarded for being linear, not for exposure and depth.

The correct placement for AI in hiring is behind human judgment, backing up decisions and aiding assessment of possibilities by providing information and potential use cases.

Used properly, AI performs three functions reliably:

- Organizing messy information so humans can assess it coherently

- Retrieving and comparing evidence consistently

- Reducing administrative burden so humans can focus on judgment

This aligns with emerging “human-in-the-loop” hiring models that treat AI as an evidentiary and analytical aid rather than a decision authority (Rigotti et al., 2024; Fernandez-Mateo, 2025).
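For teams that build or configure their own tooling, the supporting role described above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not a reference design: the names `EvidenceItem`, `CandidateBrief`, `build_brief`, and `record_decision` are hypothetical, and the simple keyword lookup stands in for whatever retrieval step an organization actually uses. The point is structural. The tool gathers and organizes evidence, flags what it could not find, and never produces a score or a verdict. The decision field can only be filled in by a named human.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class EvidenceItem:
    requirement: str       # the role requirement this evidence relates to
    excerpts: list[str]    # verbatim lines from the candidate's materials


@dataclass
class CandidateBrief:
    candidate_id: str
    evidence: list[EvidenceItem]
    gaps: list[str]                    # requirements with no matching text: flagged, not penalized
    decision: Optional[str] = None     # left empty by the tool
    decided_by: Optional[str] = None   # the human who owns the outcome


def build_brief(candidate_id, cv_lines, requirements):
    """Organize CV text by requirement without scoring or ranking.

    `requirements` maps a requirement name to a list of search terms
    (a hypothetical stand-in for any retrieval method).
    """
    evidence, gaps = [], []
    for name, terms in requirements.items():
        hits = [
            line for line in cv_lines
            if any(term.lower() in line.lower() for term in terms)
        ]
        if hits:
            evidence.append(EvidenceItem(name, hits))
        else:
            # Missing text is surfaced for a human to explore, not treated as a rejection.
            gaps.append(name)
    return CandidateBrief(candidate_id, evidence, gaps)


def record_decision(brief, reviewer, decision):
    """Attach a decision to the brief; refuses to proceed without a named reviewer."""
    if not reviewer:
        raise ValueError("a named human reviewer is required")
    brief.decision = decision
    brief.decided_by = reviewer
    return brief
```

A brief built this way arrives at the hiring manager with `decision` still empty, and a gap reads as a question to ask the candidate rather than a reason to exclude them.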

AI shouldn't decide who is suitable.

It shouldn't infer potential.

It shouldn't collapse ambiguity into binary outcomes.

Humans must remain responsible for:

- Interpreting incomplete and uneven information

- Making explicit trade-offs between requirements

- Deciding when potential outweighs credentials

- Owning the consequences of being wrong

What AI would excel at in this chain is the analytics. What are the gaps in the current organization's skill set? How do certain candidates augment the bigger picture of the organization, not just the current position? What is their likely career trajectory? How do all these components fit together to identify a stronger candidate who can be retained and developed, rather than one who simply fills the current gap?

A more workable path doesn’t start with ripping AI out of hiring, and it doesn’t start by handing it the keys either. It starts by repositioning it. At Protahi, we tend to work with organizations at the point where this inversion is already causing friction. Roles aren’t filling, shortlists don’t make sense, and teams feel like they’re managing systems instead of people. The work then becomes less about tools and more about structure: clarifying where judgment still needs to live, tightening how information flows into decisions, and using AI to reduce noise rather than manufacture certainty.

When AI is used to support human interpretation instead of replacing it, hiring tends to become calmer, more defensible, and more aligned with how organizations actually operate. It’s not a silver bullet, but it’s a shift that usually makes the system easier to live with, and easier to improve over time.