Let's be honest. It's difficult to find a business model going into 2026 that doesn't use AI somewhere in its pipeline. Sure, people on the front lines may not realize they're using AI. I've talked to many folks in recruitment who still loudly insist that "their" ATS doesn't have AI in it. Then I find out what they're using, look up the product specs, and guess what?

The point is, AI is everywhere. And we've been using it for years. We've just been calling it something else. AI is the flashiest, newest version of "smart automation," and it helps with everything from grammar suggestions and photo manipulation to automating complicated tasks that require an extensive database of knowledge.

The internet’s current posture on AI use is basically a purity test. Say you used it and someone will jump straight to: lazy, dishonest, stealing, fraud. This is posturing. It's reckless and largely self-serving. AI is apparently fine when it's running on the phone or television of the same person who's criticizing your use of it. Politics and purpose are clearly driving in two separate lanes on this topic.

Some people might claim the reaction isn't about the tool. Instead, it’s about what people have watched happen around the tool: fake citations, fabricated data, confident nonsense shipped as truth, and leaders treating outputs as “done” because they look finished. Sure. But it's about the tool too.

Because AI is misused. Frequently.

The truth is people love shortcuts, and AI gives them exactly what they're looking for. But so does pretty much every technological innovation since fire. Technology is about making our lives easier. Getting into some ethical debate over this particular piece of technology is a waste of time.

So the real question isn't “is AI allowed,” but “what kind of work is this, what’s the blast radius, and what proof do we demand before it goes live?”

AI and Data Rights

There are legitimate legal disputes about training data, licensing, and rights. That’s not imaginary, and it’s not settled. You can see the system drawing boundaries in real time, including litigation about copying content for AI-related uses and official guidance focused on human authorship and control. (Reuters, 2025; U.S. Copyright Office, 2024; U.S. Copyright Office, 2025).

But “AI use equals theft” is still a sloppy claim. Using a tool to draft, refactor, brainstorm, or structure your own work isn’t automatically wrongdoing. The ethical line shows up when people misrepresent authorship, launder unlicensed material, or ship unverified claims as fact.

If you're using AI in public, it's important to disclose when and how it's being used. That way, human work and automation aren't misrepresented. However, it's more complicated than that, because anyone could argue that any kind of automation needs to be disclosed, and under this logic they'd be right. AI's real impact here is in creative outputs. So creative work, where the line between human insight and machine output can blur, is where AI use needs to be disclosed and acknowledged.

I use AI to help create our videos and imagery because it allows me to focus on work that actually matters. I'm not a cartoonist or a trained actor. I keep my styles neutral, or clearly parody, to avoid any confusion. I never try to pass off AI-generated imagery as my own work.

That's the line.

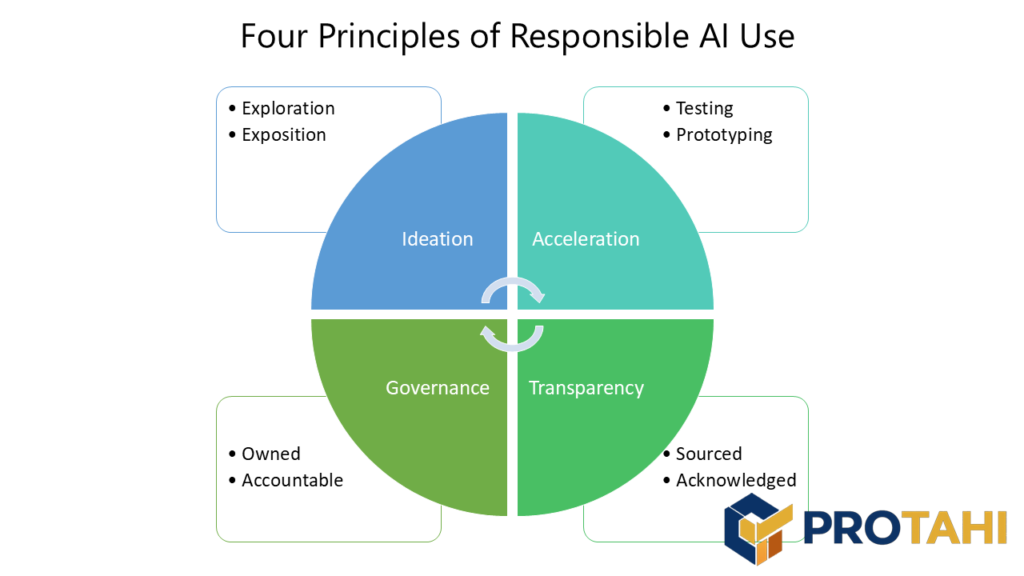

Responsible AI Use

AI is easiest to use responsibly when the work is:

- Low stakes - A mistake is annoying, not catastrophic

- Verifiable - You can prove it with tests, sources, or inspection

- Reversible - You can roll it back without harm

- Bounded - The tool isn’t making the decision, it’s assisting a human who is

- Culturally locked - The tool is not permitted to mimic culture without clear oversight and constraints

That’s basically what modern risk frameworks keep circling back to: governance, oversight, evaluation, monitoring, and clear accountability. Not vibes. (NIST, 2024).

AI is fine to use when it stays in its lane. It can speed up drafting and exploration, but it can’t be treated as evidence. If a claim needs proof, the proof has to be real, checkable, and properly attributed, because models will fill gaps when context is missing and still sound confident. (Farquhar et al., 2024). LLM-generated code that compiled was still frequently wrong and included security-relevant failures; “fix it” loops don’t reliably eliminate issues and can introduce new ones (Chong et al., 2024). Depending completely on AI isn't just bad social form; put into context, it's also poor business practice.
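To make the "compiles but still wrong" point concrete, here is a minimal sketch. The `median` function stands in for a hypothetical piece of generated code; it is not taken from any of the cited studies, and the helper names are my own. It parses and runs without complaint, and only a few human-written checks with known answers show that it's wrong.

```python
import ast

# Hypothetical "generated" code: valid Python that runs without error,
# but it never sorts the input and ignores the even-length case.
GENERATED_SOURCE = '''
def median(values):
    return values[len(values) // 2]
'''


def parses_cleanly(source: str) -> bool:
    """Return True if the source is syntactically valid Python."""
    try:
        ast.parse(source)
        return True
    except SyntaxError:
        return False


def load_function(source: str, name: str):
    """Run the source in its own namespace and return the named function.

    Only do this with code you have actually read.
    """
    namespace = {}
    exec(compile(source, "<generated>", "exec"), namespace)
    return namespace[name]


def passes_checks(median_fn) -> bool:
    """Human-written checks with answers worked out by hand."""
    cases = [([1, 2, 3], 2), ([3, 1, 2], 2), ([1, 2, 3, 4], 2.5)]
    return all(median_fn(list(values)) == expected for values, expected in cases)


generated_median = load_function(GENERATED_SOURCE, "median")
print("parses:", parses_cleanly(GENERATED_SOURCE))
print("passes checks:", passes_checks(generated_median))
```

The first check passes by luck because the input happens to be sorted already. The proof lives in the checks a human wrote, not in the fact that the code ran.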

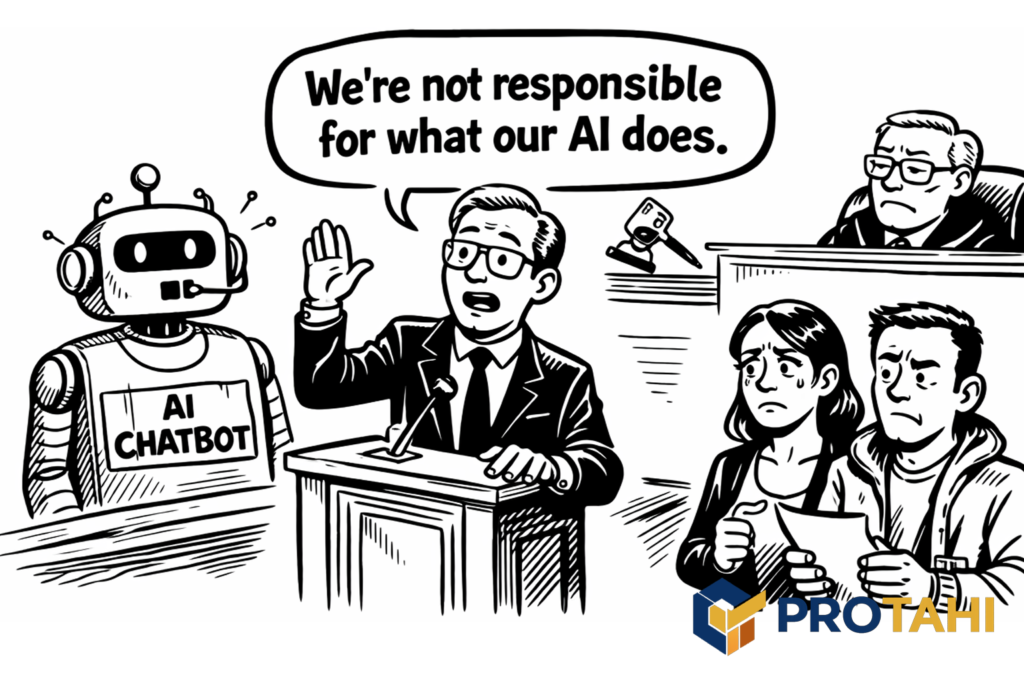

When AI touches customers, transparency stops being optional because the consequences show up fast, in money and trust. In Moffatt v. Air Canada (2024 BCCRT 149), Air Canada’s website chatbot gave a customer wrong information about bereavement fares. The customer relied on it, Air Canada refused the refund, and the tribunal found negligent misrepresentation and ordered Air Canada to pay C$812.02 in damages and fees. Air Canada even tried to argue the chatbot was “responsible for its own actions,” and the tribunal rejected that posture. The takeaway is simple: if the bot speaks to customers, the company owns what it says, and pretending it’s “just the tool” doesn’t work. (American Bar Association, 2024; McCarthy Tetrault, 2024; The Guardian, 2024).

In another instance, Google’s AI search summaries shipped and immediately produced viral, wrong outputs, including answers that drew from satirical or joke sources. Google publicly acknowledged the issue and adjusted the product. That’s what “public-facing AI” looks like in the wild: you don’t get a quiet failure mode. You get screenshots. (Google, 2024; The Guardian, 2024).

Bottom line: “we used AI” can’t be used as a shield. If the output contains wrong numbers, invented references, or claims you can’t defend, that’s not an AI problem. That’s a failure of review and accountability.

Any use of AI must be traceable to a real source, and a real human must own accountability over its use in context.
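One way to picture that rule is as a release gate. The sketch below is an assumption about how you might structure it, not a description of any particular product or of our own tooling: the `Claim` record, its field names, and `blocking_claims` are all hypothetical. Each claim in a draft needs a source a reader can check and a named human who verified it, or it doesn't ship.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Claim:
    text: str
    source: Optional[str] = None       # where a reader can check the claim
    verified_by: Optional[str] = None  # the named human who checked it


def blocking_claims(claims: list[Claim]) -> list[Claim]:
    """Return every claim that lacks a source or a human owner."""
    return [c for c in claims if not c.source or not c.verified_by]


draft = [
    Claim(
        "The tribunal ordered Air Canada to pay C$812.02.",
        source="Moffatt v. Air Canada, 2024 BCCRT 149",
        verified_by="editor on duty",  # placeholder name for illustration
    ),
    # A made-up, unsupported line of the kind a model might add on its own.
    Claim("Most teams already use AI daily."),
]

blocked = blocking_claims(draft)
for claim in blocked:
    print("needs a source and an owner:", claim.text)
```

The gate doesn't judge whether a claim is true. It only refuses to let anything through that nobody has put their name to.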

A Gut Check to Limit Exposure

AI is a good fit when:

- You can describe exactly how you’ll verify the result before you run it.

- You can undo it cleanly if it turns out wrong.

- The tool is accelerating work you already understand.

AI is a bad fit when:

- The output will be treated as authoritative because it sounds confident.

- The work affects people’s rights, livelihoods, or safety and you don’t have strong oversight.

- You’re tempted to skip evidence because the draft looks polished.

That’s the whole point. The tool compresses effort, but it doesn’t compress responsibility.
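If it helps, the gut check can be written down as a short function. This is a rough sketch under my own assumptions: the `Task` fields and the wording of each concern are illustrative, and a real review would need more than a few booleans.

```python
from dataclasses import dataclass


@dataclass
class Task:
    verification_plan: str   # how the result will be checked, written before running the tool
    can_undo: bool           # can it be rolled back cleanly?
    understood_by_owner: bool  # is the tool accelerating work you already understand?
    affects_rights_or_safety: bool = False
    has_strong_oversight: bool = False


def gut_check(task: Task) -> list[str]:
    """Return the reasons AI is a bad fit for this task (empty list means good fit)."""
    concerns = []
    if not task.verification_plan.strip():
        concerns.append("no verification plan")
    if not task.can_undo:
        concerns.append("cannot be undone cleanly")
    if not task.understood_by_owner:
        concerns.append("owner does not understand the work")
    if task.affects_rights_or_safety and not task.has_strong_oversight:
        concerns.append("high impact without strong oversight")
    return concerns


# Two made-up examples: a low-stakes draft and a high-impact decision.
blog_outline = Task("Editor reads the outline against the brief", True, True)
candidate_screening = Task("", False, True, affects_rights_or_safety=True)

print(gut_check(blog_outline))
print(gut_check(candidate_screening))
```

An empty list isn't permission to stop thinking. It just means none of the obvious warning signs fired.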

So Is It OK to Use AI?

It is absolutely OK to use AI, as long as you're clear about how you're using it and responsible for what it produces. I use AI extensively inside Tumeke Studio, but not as a substitute for judgment. We’re generating content live, then running it through an indigenous governance layer that decides what can be released, what needs revision, and what gets rejected (Tumeke Studio, 2025). That’s a high-risk setup because the failure mode isn’t “a rough draft.” It’s confident output landing in public space before anyone’s checked it. It works because we're clear that we're doing it, and we're not trying to masquerade as an authority. We're using AI to produce live, interactive cultural fiction.

That’s why AI is only “OK” when it stays in its lane: acceleration, drafting, exploration. It can’t be treated as evidence, and it can’t be allowed to speak with unearned authority. NIST flags confabulation (hallucination) as a core GenAI risk, which is exactly the problem when context is missing and the tool fills gaps anyway. When organisations let AI talk to customers as if it’s authoritative, liability and trust damage arrive fast. The Air Canada chatbot misrepresentation case is the clean example. In engineering, the same logic applies: generated code can compile and still be wrong or insecure, so proof still lives in review and testing, not in the model’s confidence.