Case Study: Rebuilding Technology Confidence and Compliance Readiness at East Bay Community Recovery Project

- Change Management

- Government Compliance

- Service Delivery

When I joined East Bay Community Recovery Project (EBCRP) as Head of IT and Service Delivery, the organization had effectively voted “no” on technology. Not because staff were stubborn, but because prior implementations had trained them that paper was the only dependable system. The operational cost was constant friction: delayed reporting, inconsistent records, and avoidable risk in a setting that serves high-need clients.

The timing made it worse. Around the same time, the HITECH Act was signed into law (February 2009), pushing federally connected healthcare ecosystems toward electronic records, “meaningful use,” and stronger expectations around privacy, security, and auditability (U.S. Department of Health & Human Services, 2017). For agencies relying on public funding and inter-agency coordination, the direction of travel was clear: stay paper-based and brittle, or modernize and survive (Burde, 2011).

My mandate was simple: stabilize the foundation, implement a credible EHR pathway that met the “certified technology” direction of federal programs, then rebuild staff confidence through training and reliable support. If that didn’t happen in time, solvency risk wasn’t theoretical.

Problem Area 1: Infrastructure

The infrastructure wasn’t just “old.” It was neglected to the point where failure was normal. Identity and access depended on a poorly maintained FreeBSD-based domain controller that required specialist intervention whenever something went sideways. About 130 desktops were in circulation, with flaky connectivity and aging parts that turned basic work into repeated resets. External communication and reporting were bottlenecked by a 1.5 Mbps-class DSL connection, so month-end reporting didn’t just feel stressful; it became predictable chaos, because the pipe could not carry the workload.
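For a rough sense of scale, assume a hypothetical 1 GB transfer (for example, a backup or a month-end data export) at the full nominal 1.5 Mbps, with no protocol overhead and nothing else using the line:

$$
t = \frac{1\,\text{GB} \times 8 \times 10^{9}\,\text{bits/GB}}{1.5 \times 10^{6}\,\text{bits/s}} \approx 5{,}333\,\text{s} \approx 89\,\text{min}
$$

Real throughput is lower than the nominal rate, and if the line was asymmetric, the upstream rate that governs offsite backups would be only a fraction of that figure, while staff traffic competed for the same pipe.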

The organization’s culture had absorbed the lesson: technology was bolted on after the fact, electronics were an inconvenience, and computers could not be trusted.

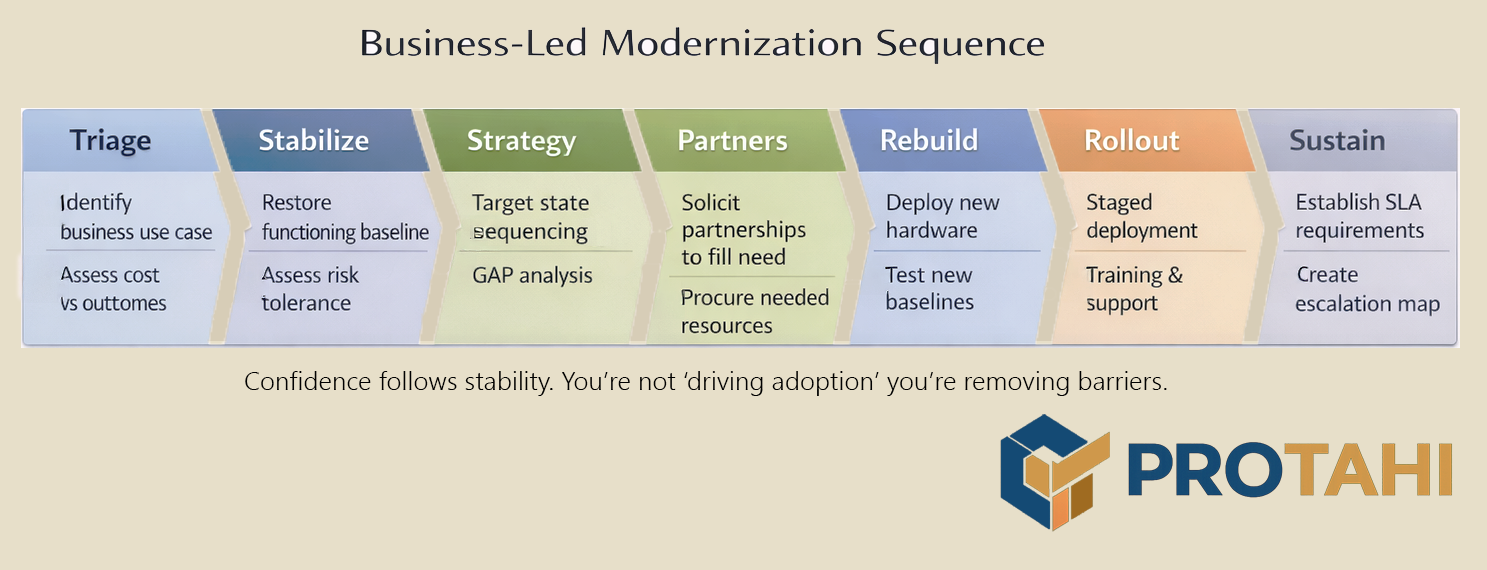

The recovery plan started with one principle: no workflow change survives on a failing foundation. So the sequence was deliberate. First, stabilize core services and remove single points of failure. Next, replace network components and endpoints that were actively training staff to distrust systems. Then, fix outbound capacity because backups, coordination, and any serious hosted strategy are dead on arrival if the connection is a bottleneck.

I partnered with VMware to support the modernization effort and rebuilt the on-site environment around resilient, HA-capable Dell server arrays, with updated networking and refreshed desktops. The point wasn’t “new shiny.” The point was predictable operations and failover recovery: services stay up, logins work, printing works, and the network stops randomly dropping out. Once that baseline existed, we could introduce remote work patterns, offsite backups, and later SaaS options without gambling the agency’s continuity on every change.

Result

Technology stopped being a daily argument. Systems became boring and predictable. That is the precondition for asking staff to trust any complex, change-heavy implementation, especially where culture is an entrenched part of the problem.

Problem Area 2: Service Delivery

Infrastructure stability is necessary, but it doesn’t create adoption. EBCRP’s service delivery reality was that technology had no trustworthy operating model. People didn’t know what would happen when they needed help, how long it would take, or whether escalating an issue would just make it worse. So they avoided raising problems for as long as possible.

In parallel, the agency’s “electronic” health record was already compromised: an inaccurate, siloed database with limited customization and heavy reliance on free-text input. There was no trusted source of truth and no clean way to coordinate information across teams. The de facto service model was avoidance until something broke, a cumbersome manual addenda process to bring records into compliance before each audit, and then a scramble to trace failures as they cascaded. It had to change.

HITECH-era policy direction mattered here because it reframed the problem. The bar was no longer “have a database” or “have a few computers.” The bar was moving toward meaningful use of certified EHR technology and information exchange expectations, backed by incentives and program requirements (Burde, 2011; Sprague, 2015). CMS describes “Certified EHR Technology” (CEHRT) in terms of ONC certification and required functionality in the reporting period, which reinforced that this could not be another homegrown silo with no standards path (Centers for Medicare & Medicaid Services, 2024). To prove out that path, we partnered with Felton Institute and designated a pilot program where interoperability could be tested before agency-wide rollout.

So I treated service delivery as its own transformation. I established a predictable support operation, then ran staged rollouts department by department. Each department went through the same cadence: stabilize their baseline workflows, configure what was needed, train the team, go live with heavy support coverage, then harden and refine. That pacing mattered because it kept failures contained and it let early adopters become internal proof that the new way worked.

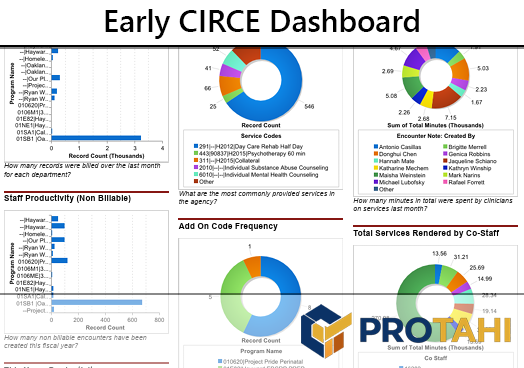

For the EHR path, I partnered with Salesforce to build an EHR capability on the platform, tailored to EBCRP’s care delivery needs and reporting realities. The significance wasn’t brand names. It was proving that the organization could move from “paper is safer” to “the system is usable and auditable,” while keeping delivery moving. Part of this work was ensuring that all data was machine-readable and quantifiable, because having “a database” isn’t enough. If data is buried in non-indexed free-text fields or entered with inconsistent spelling, what should be usable data becomes bloat. To address this, I developed a predictive system that assisted clinicians with documentation and standardized their inputs, giving internal audit teams and management deeper insight into service delivery.
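The case study doesn’t describe how that predictive system worked internally. Purely to illustrate the general idea of input standardization, here is a minimal Python sketch that suggests terms as a clinician types and maps misspelled free text onto a controlled vocabulary using the standard library’s `difflib`. The vocabulary, service codes, function names, and the 0.8 similarity cutoff are all hypothetical assumptions, not EBCRP’s implementation.

```python
from difflib import get_close_matches

# Hypothetical controlled vocabulary mapping canonical terms to service codes.
# The real EBCRP vocabulary is not described in the case study.
SERVICE_TERMS = {
    "individual counseling": "SVC-IC",
    "group counseling": "SVC-GC",
    "case management": "SVC-CM",
    "home visit": "SVC-HV",
}


def suggest_terms(prefix, vocabulary=SERVICE_TERMS, limit=3):
    """Return up to `limit` canonical terms starting with what was typed so far."""
    typed = prefix.strip().lower()
    return sorted(term for term in vocabulary if term.startswith(typed))[:limit]


def standardize(raw_entry, vocabulary=SERVICE_TERMS, cutoff=0.8):
    """Map a free-text entry to (canonical term, code), or None if no close match.

    Whitespace and case are normalized first; an exact hit is returned directly,
    otherwise difflib's similarity ratio is used to catch typos.
    """
    cleaned = " ".join(raw_entry.lower().split())
    if cleaned in vocabulary:
        return cleaned, vocabulary[cleaned]
    matches = get_close_matches(cleaned, list(vocabulary), n=1, cutoff=cutoff)
    if matches:
        return matches[0], vocabulary[matches[0]]
    return None  # leave for human review rather than guessing


if __name__ == "__main__":
    print(suggest_terms("ca"))
    print(standardize("Case  Managment"))
    print(standardize("zzzz"))
```

Entries that fall below the cutoff return `None`, so they can be routed to a person instead of being silently forced into the wrong code, which matters more for auditability than squeezing out a few extra automatic matches.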

This work predated Salesforce Health Cloud, which launched publicly on September 2, 2015 (Salesforce, 2015). We began our live rollout in 2010, and I believe this work helped shape what became Health Cloud. It also led to the founding of an independent software development company, CIRCE Technology (funded by Felton Institute), by demonstrating in practice that platform-based approaches could support care workflows beyond their sales CRM origins. The Health Cloud connection is an inference about pattern and timing, not a claim of sole causality.

Result

EBCRP moved from brittle reporting scrambles and untrusted data to being viewed as an innovator in the local space. That shift came from a structured operating model in which reporting, coordination, and records management could be executed without heroic effort every month. I also began working with other agencies, such as Bay Area Community Services (BACS), where I showed their technical staff how to achieve similar outcomes.

Problem Area 3: End User Support

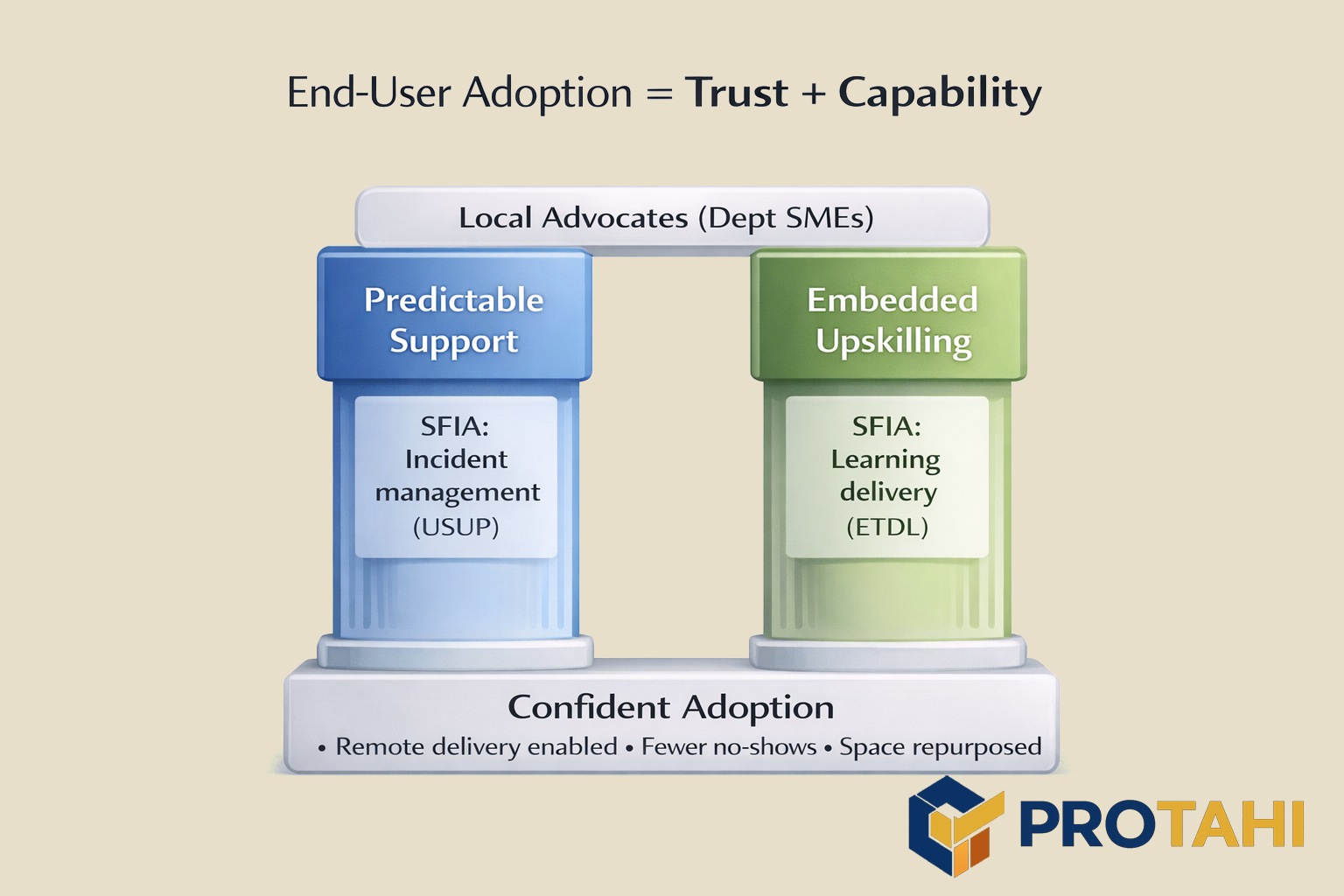

This was the real battlefield. Staff didn’t need persuasion. They needed the lived experience of technology not betraying them, plus the skills to recover when something went wrong.

So training and support were built into the work, not bolted on afterward. Every rollout included hands-on upskilling, simple job-relevant guidance, and clear communication about what would change and what would not. I avoided “IT posturing.” If the answer was “we broke something,” we said so, fixed it, and explained the prevention step cleanly. If the answer was “this is how the system behaves,” we showed it in plain language and documented it in a form people would actually use. Those protocols meant people knew what to expect and how to get the results they needed.

I also spent time inside departments during go-lives and built a network of departmental advocates, independent of management, who became local subject-matter experts (SMEs) before rollout. The goal was twofold. First, it let DevOps teams get information from actual practitioners and avoid committee-based sanitization. Second, because each advocate worked directly with DevOps to keep deliverables aligned, it demonstrated on delivery that the product was usable and solved real departmental problems. This wasn’t about morale. It was about speed and feedback loops. If someone hesitated, you could see why. If a workflow step didn’t match reality, you found it immediately. If an outage happened, staff watched the response and decided whether the new system was worth trusting.

By building internal confidence in systems, we were able to quickly redefine what those systems were capable of. Within 18 months of project start, end users were working remotely in the field, including visiting client homes and designated service areas. Meeting clients where they were addressed many common causes of no-shows, which increased delivery rates and, in turn, improved program budgets. Eventually some staff, including IT staff, began working fully remotely, allowing office space to be repurposed into shared workspaces and service delivery areas for clients.

Result

Confidence stopped being abstract. Staff, including older staff, adopted the system because it worked, because support was dependable, and because they were trained to succeed instead of being blamed for disliking broken tools.