In December 1903, the Wright brothers got a powered aircraft into the air for 12 seconds and 120 feet. Aviation wasn't solved that day. It entered a proof-of-concept stage: people could leave the ground. Less than six years later, Louis Blériot flew from Calais to Dover. That is the shortest crossing between England and France, about 21 miles by sea.

I'm saying this as a reminder: Big breakthroughs don't arrive as finished infrastructure. They arrive as awkward, bounded demonstrations that take years of iteration before they are trusted to carry people across real distance, in real conditions, with real consequences. A modern project manager might call this "Agile" and attempt to slap some process labels on it to create a PowerPoint presentation. But in reality it's just how things work.

AI coding tools are in the 1903 phase, but we keep talking about them like they are already in the Channel-crossing phase. We are trying to make the plane fly before it is ready, and we are doing it by collapsing the entire job of software engineering into a single visible artifact: code.

If AI can produce code, the story goes, then AI can replace the people who write it. Management at big firms is in fact rushing into this, but if you look at it from a purely AI perspective rather than one of mega-corporate finance, that's the wrong model of the job. Code is the output. Engineering is the selection pressure that makes the output correct, safe, maintainable, and accountable.

The practical gap shows up fast in real work. The tool can generate something that looks confident, even when it is operating on missing context. It can assume states that aren't true, contradict itself, and still present the result as if it has verified reality. That isn't a minor quirk. It is exactly why human developers aren't going away any time soon.

The Hype: “Most code will be written by AI”

In 2025, public commentary from AI leaders and business media has leaned hard into near-term dominance narratives, including claims that AI will write the majority of code within months, and that software engineering roles will be heavily disrupted. That framing is designed to feel inevitable. It’s also a category error (Duffy, 2025; Shead, 2025).

This is backed up by various hype gurus online who sucker people in with talk about how they programmed their new million-dollar app in less than a week using vibes. What they're not telling you is that the app didn't make them any money. What they're really doing is selling hype, and they're being paid by big companies to peddle AI as a simple solution when it's not one.

Even the more sober workforce forecasts still describe a mixed reality: disruption, churn, and skill shifts, not a clean replacement curve. The World Economic Forum’s 2025 reporting emphasizes reallocation and reskilling pressure across roles, including technology roles, rather than “no more humans needed.” (World Economic Forum, 2025)

Speed Gains, not Autonomy

There is real evidence that AI tooling can make developers faster on bounded work.

- Google ran an enterprise randomized controlled trial and estimated about a 21% reduction in time-on-task for an enterprise-grade development task when developers had access to specific AI features. The authors also explicitly flag that results can vary by tool and context. (Paradis et al., 2024)

- A separate working paper reporting three field experiments with software developers found throughput effects when developers were given access to a generative AI coding tool. (Cui et al., 2025)

Those are meaningful gains. They also don’t imply replacement, because none of this demonstrates end-to-end ownership of real software outcomes: interpreting intent, negotiating constraints, controlling risk, managing change, and being on the hook when it breaks.

Put bluntly: “faster keystrokes” is not “finished systems.”

Why “AI can code” Still Doesn’t Mean “AI can engineer”

I've tested AI-based programming tools myself a number of times over the last 12 months. In each case the tool generated confident fixes while being wrong about the environment and wrong about what was actually happening. It assumed conditions that weren’t true, contradicted itself, and failed to converge until I force-fed it individual steps that only a competent programmer could have worked out, and told it precisely where to check for errors. Until then it chased its own tail, essentially guessing at solutions and rewriting the same broken code until guided out of its loop.

That pattern isn't a one-off. NIST treats “confabulation” as a core generative AI risk category, and it explicitly recommends verification practices during testing and monitoring to manage it. (National Institute of Standards and Technology, 2024)

Independent research is aligned with that risk posture. A 2024 Nature paper focuses on hallucinations and “confabulations” as a practical barrier to adoption, and it frames the problem as unreliability that must be detected and handled, not trusted through. (Farquhar et al., 2024)

So the question becomes simple: if a system can generate plausible output that is sometimes wrong in non-obvious ways, who carries responsibility for correctness? In real engineering environments, the answer is still “a human operator and a human organization.”

Long-Horizon Work is Where it Fails

Coding assistants look strongest on short, local tasks. Real engineering is long-horizon and cross-cutting: multiple files, multiple services, backwards compatibility, test strategy, risk containment, and production constraints.

That’s why software engineering agent benchmarks matter, even if they are imperfect.

- SWE-bench Verified is a curated subset designed to ensure the issues are actually solvable from the problem statement. The state-of-the-art figures OpenAI reports against that benchmark remain materially below full coverage, not “it just does the job.” (OpenAI, 2024/2025)

- SWE-Bench Pro (2025) explicitly targets long-horizon tasks that “may require hours to days for a professional software engineer,” and reports that model performance remains well below full success under its evaluation setup. That is the shape of the problem that determines whether replacement is real. It currently isn’t. (Deng et al., 2025)

This is the core rebuttal: the work that defines engineering value is exactly the work that doesn't reduce cleanly to a single prompt-response loop.

More Speed Means More Risk

“Works on my machine” was always a trap. “AI wrote it” is just the new version.

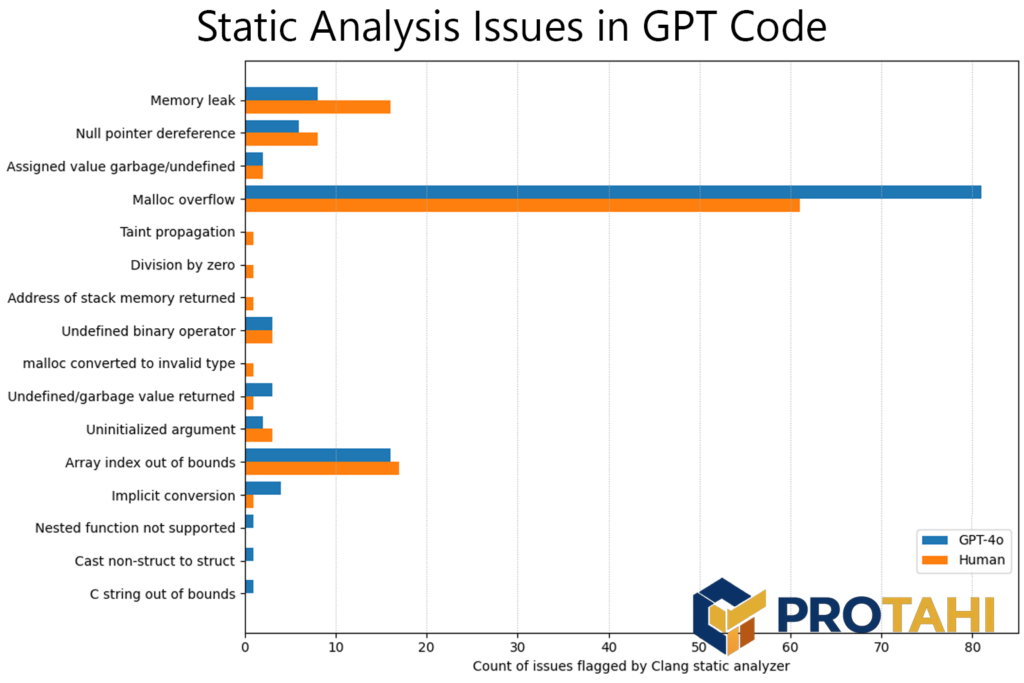

A 2024 study on LLM-assisted secure code generation shows that models can produce insecure or incorrect security-relevant implementations, including cryptographic mistakes, and that outcomes depend heavily on how developers interact with and validate the outputs. That is the opposite of replacement. It’s a demand for stronger review discipline. (Chong et al., 2024)

If your organization treats AI output as authoritative, you are trading visible speed for invisible risk. That trade is not acceptable in any serious environment.

Don't Try to Imitate the Big Players

AI can be useful. The failure mode is letting it become the decision-maker.

Use it like any other powerful tool:

- Keep humans accountable for requirements, architecture, and acceptance criteria. If no one can state “what done means,” AI can’t rescue you.

- Require normal engineering hygiene: code review, tests, static analysis, threat modeling, and rollback plans. AI doesn't change the need for these controls. It increases the need for them.

- Log prompts and outputs for reproducibility and post-incident learning. NIST explicitly emphasizes governance, pre-deployment testing, provenance, and incident disclosure as practical control areas for generative AI systems. (National Institute of Standards and Technology, 2024)

- Treat AI as an accelerator for drafts, not an authority for decisions. It can propose. It can’t own.
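
As a rough illustration of the logging and accountability points above, here is a minimal Python sketch. Everything in it is an assumption made for the example: the `AIDraftRecord` schema, the `log_ai_draft` and `can_merge` helpers, and the JSON Lines log file are hypothetical names, not part of any particular tool or NIST guidance. It shows one way to keep an append-only record of prompts and outputs, and to make sure an AI draft only counts as accepted when a named human has signed off and the tests pass.

```python
import hashlib
import json
from dataclasses import asdict, dataclass
from datetime import datetime, timezone
from pathlib import Path
from typing import Optional


@dataclass
class AIDraftRecord:
    """One prompt/output pair plus the human decision about it (hypothetical schema)."""
    timestamp: str
    tool: str
    prompt: str
    output: str
    output_sha256: str
    reviewer: Optional[str] = None  # the human accountable for accepting the draft
    accepted: bool = False


def log_ai_draft(log_path: str, tool: str, prompt: str, output: str) -> AIDraftRecord:
    """Append the prompt and output to a JSON Lines file so they can be replayed later."""
    record = AIDraftRecord(
        timestamp=datetime.now(timezone.utc).isoformat(),
        tool=tool,
        prompt=prompt,
        output=output,
        # Hash of the output, so a later review can confirm which draft was examined.
        output_sha256=hashlib.sha256(output.encode("utf-8")).hexdigest(),
    )
    with Path(log_path).open("a", encoding="utf-8") as f:
        f.write(json.dumps(asdict(record)) + "\n")
    return record


def can_merge(record: AIDraftRecord, tests_passed: bool) -> bool:
    """Treat AI output as a draft: require a named reviewer, explicit acceptance, and passing tests."""
    return bool(record.reviewer) and record.accepted and tests_passed
```

In use, a team might call `log_ai_draft("ai_audit.jsonl", "some-assistant", prompt, output)` whenever a draft is generated, and have its merge tooling check `can_merge(record, tests_passed)` before anything ships. The design choice worth noting is that the default is rejection: a fresh record has no reviewer and is not accepted, so the tool can propose but a person has to own the decision.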

A wrench can speed up your work. It can't decide what the building should be, whether the beam is load-bearing, or whether the bolt is shearing under stress. AI is the same. It’s a tool that amplifies competent operators and punishes complacent ones.

Until AI can reliably carry responsibility for outcomes across long-horizon systems, under real constraints, with real consequences, it’s not replacing coders. It’s reshaping how coders work. Team building needs to be approached with that in mind.

Yes, the big players are cutting large numbers of staff. They have the budgets to push boundaries, and their strategy is simple: reduce until it breaks, then rehire. Most businesses don't have the luxury of deep pockets to play in this type of sandbox. The majority of us need to keep our expectations realistic.